Last week I wrote about how the Internet of Things will give various bureaucracies (corporate and governmental) an opportunity to inject not only their information-gathering functions but also their rule-imposing functions ever more deeply into the technologies that surround us, and thus into our daily lives. In short, it will let them violate our privacy and increase their control. But the situation is actually even scarier than that, because buried within the activity of "rule imposing" lies another, inseparable function: "judgment making." And a whole lot more trouble lies there.

As I argued last week, computers and bureaucracies share many similarities. Both take input in standardized form, process that data according to a set of rules, and spit out a result or decision.

But they also share similar weaknesses. Computers are mentally “stiff” and prone to laughable, child-like errors that even the dumbest human would never make. Computers can be mindless and inflexible, unforgetting and unforgiving, yet simultaneously quirky and error-prone. Bureaucracies are also notorious for their mindless application of rules and the dumb, irrational decisions that often result.

Both bureaucracies and today’s computers face the same fundamental limitation: they are unable to cope with the boundless variety of human life. Both try to jam the rich diversity of human life and individual circumstances into a finite set of rigid categories. But because human existence is so varied, individuals cannot be assured of fair treatment unless they can be judged according to their individual circumstances, on a case-by-case basis, by broadly intelligent, flexible minds exercising discretion. (I have touched on these themes in prior blog posts, including here and here.)

Judges and discretion

In the 19th century, American judges often envisioned themselves as a kind of computer or automaton, with the job of impersonally applying the law to individual cases, with no room for personal judgment. As legal historians have described, this naïve formalist view was challenged in the early 20th century by the more sophisticated Legal Realists, who understood what was to many a radical, mind-blowing concept: general rules cannot be applied to particular circumstances without the use of discretion. In other words, because general rules can never cover the vast variety of unique circumstances that crop up in real human life, applying those rules to a given case of any complexity can never be done objectively or automatically; there’s no escaping the need to use judgment. No algorithm will be sufficient, whether that algorithm is applied by a computer, a bureaucracy, or a 19th-century judge.

Another way of putting it is that no matter how detailed a set of rules is, and no matter how comprehensive its attempt to deal with every contingency, circumstances will arise in the real world that break it. Applied to such circumstances, the rules will be indeterminate, self-contradictory, or both. This is sort of a Gödel’s incompleteness theorem of law.

As libertarian conservatives (such as Friedrich Hayek and Ludwig von Mises) pointed out, this insight poses a serious problem for the concept of the “rule of law, not men.” How can the law be applied fairly, impartially, and predictably when we recognize that judges must use discretion to apply it? (And "judge" here means anyone who must apply or enforce rules in individual circumstances, including bureaucratic clerks and the cop on the beat as well as proper judges.) What is the difference between a judge free to exercise her own discretion, and a dictator? How can businesses and individuals act with the confidence that they understand the law when it can never be pinned down?

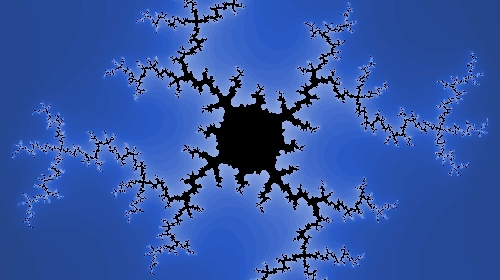

This is a knotty conundrum of administrative law (but one that won’t go away by trying to deny its existence as some conservatives would do). The way I think about it is that rules are like fractals: they have an undeniable basic shape, and there are always points that clearly lie inside their boundaries and points that clearly lie outside, but they’re fuzzy at the margins. From afar, those boundaries appear solid, but upon close examination they dissolve into an impossibly complex, ever-receding tangle of branches and sub-branches. And we must be alert to the possibility that even a point that appears to fall far inside or outside the boundary may not be what it seems.

So far the best that humans have come up with is what might be described as "guided discretion." First, judges must work within the core currents of the law, but apply their own judgment at the margins. Second, such discretion must be subject to review and appeal, which, while still vulnerable to mass delusions and prejudices such as racism, at least smooths over individual idiosyncrasies to minimize the chances of unpredictably quirky rulings.

This duality (a rough, macro-level outline of hard law with soft individual judgment and discretion at the edges) is essential if we want individuals to be treated fairly. On the one hand, if we allow judges (or police officers, or clerks) to work without the guiding framework of law, they become nothing more than petty tyrants, and we become totally vulnerable to their racism or other prejudices, quirks, and whims. On the other hand, if we remove all discretion, the inevitable result is injustice, such as resulted in the United States when Congress took away judicial discretion over sentencing. (Just one example: a federal judge haunted by the 55-year sentence he was forced to give a 24-year-old convicted of three marijuana sales. See also this discussion.)

As computers are deployed in more regulatory roles, and therefore make more judgments about us, we may be afflicted with many more of the rigid, unjust rulings for which bureaucracies are so notorious. The ability to make judgments, and account for extenuating circumstances, is one that requires a general knowledge of the world; it is what computer scientists call an "AI-complete problem," meaning that programming a computer to do it is basically the same problem as programming a computer with human-level intelligence.

Escape valves

For all their similarities, there's an important way that bureaucracies are actually the opposite of computer programs. Computers’ basic building blocks are exceedingly simple operations, such as “add 1 to the X Register” and “check if the X Register is bigger than the Accumulator.” Despite this simple machine-language core, when millions of those operations are piled one on top of one another, we get the amazingly (and often frustratingly) subtle and unpredictable behavior of today’s software. Preschool-level mathematical operations, when compiled in great numbers, together produce a mind far smarter than the constituent elements.
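To make that concrete, here is a toy sketch in Python. It is my own illustration, not any real instruction set: the `ToyMachine` class, its register names, and the `clear` operation are all invented for the example. The machine can only zero a register, add 1 to a register, and check whether one register is bigger than another, yet stacking those steps is enough to multiply two numbers.

```python
# Toy illustration only: a hypothetical machine, not a real instruction set.

class ToyMachine:
    """A machine that can only clear, add 1 to, and compare registers."""

    def __init__(self, registers):
        self.registers = dict(registers)
        self.steps = 0  # how many primitive operations have run

    def clear(self, name):
        # "Set the register to zero."
        self.registers[name] = 0
        self.steps += 1

    def add_one(self, name):
        # "Add 1 to the register."
        self.registers[name] += 1
        self.steps += 1

    def is_bigger(self, left, right):
        # "Check if the left register is bigger than the right one."
        self.steps += 1
        return self.registers[left] > self.registers[right]


def multiply(a, b):
    """Multiply two non-negative integers using only the primitives above.

    Returns the product and the number of primitive steps it took.
    """
    m = ToyMachine({"A": a, "B": b, "I": 0, "J": 0, "X": 0})
    while m.is_bigger("B", "I"):      # repeat B times...
        m.clear("J")
        while m.is_bigger("A", "J"):  # ...adding 1 to X, A times
            m.add_one("X")
            m.add_one("J")
        m.add_one("I")
    return m.registers["X"], m.steps


product, steps = multiply(6, 7)  # product is 42
```

Even this trivial calculation takes far more primitive steps than the size of its answer, which gives a small taste of how much simple machinery sits underneath anything a computer does.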

Bureaucracies’ building blocks, on the other hand, are highly intelligent human beings who, when assembled, form a mind that is often far dumber than its constituent parts. The madness of mobs is a cliché, but the same principle applies to the seeming opposite of a mob: the cold cubicles of a bureaucracy. It may be made up of intelligent beings, but the emergent intelligence that results from the interaction of those beings can be considerably less smart than any one cog in the machine, making judgments that any one member of the bureaucracy, if extracted from his or her role as cog, could instantly perceive as off-base.

Part of the reason is that humans who work in a bureaucracy are forced to limit the application of their intelligence—to become dumber. Max Weber, one of the first to think deeply about bureaucracy, noted that

Its specific nature . . . develops the more perfectly the more the bureaucracy is “de-humanized,” the more completely it succeeds in eliminating from business love, hatred, and all purely personal, irrational, and emotional elements which escape calculation.

A bureaucrat is a person who is more dedicated to the operation of the machine—the process—than to the substantive goal to which the machine is supposed to be dedicated. The legal equivalent is the judicial conservative who, terrified of admitting any role for discretion, is more committed to “procedural due process” than to “substantive due process”—i.e. if the guy got a proper trial, then it is not a justice’s job to care whether or not he might actually be innocent before we execute him. For a computer, of course, there is nothing BUT process—there is no question of caring about substantive ends because computers don’t know anything about such things.

Bureaucracies are constituted by humans, however, and not all humans cower in the illusory shelter of objective process. As a result, bureaucracies often have something that computers do not: logical escape valves. When the inevitable cases arise that break the logic of the bureaucratic machine, these escape valves can provide crucial relief from its heartless and implacable nature. Every voicemail system needs the option to press zero. Escape valves may take the form of appeals processes, or higher-level administrators who are empowered to make exceptions to the rules, or evolved cultural practices within an organization. Sometimes they might consist of nothing more than individual clerks who have the freedom to fix dumb results by breaking the rules. In some cases this is perceived as a failure—after all, making an exception to a rule in order to treat an individual fairly diminishes the qualities of predictability and control that make a bureaucratic machine so valuable to those at the top. And these pockets of discretion can also leave room for bad results such as racial discrimination. But overall they rescue bureaucracies from being completely mindless, in a way that computers cannot be (at least yet).
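A rough sketch of the difference, in Python. Everything here is hypothetical and invented for illustration: the eligibility rule, the income threshold, and the names `Case`, `rigid_decision`, `decision_with_escape_valve`, and `sympathetic_clerk` do not come from any real benefits system. The first function applies the rule and nothing else; the second applies the same rule but hands denied cases with unexplained circumstances to a person, the software equivalent of pressing zero.

```python
from dataclasses import dataclass

# Hypothetical threshold, invented for this example.
INCOME_LIMIT_PER_PERSON = 1000


@dataclass
class Case:
    income: int
    household_size: int
    notes: str = ""  # circumstances the written rule knows nothing about


def rigid_decision(case):
    """Apply the rule and nothing but the rule."""
    limit = INCOME_LIMIT_PER_PERSON * case.household_size
    return "approve" if case.income <= limit else "deny"


def decision_with_escape_valve(case, human_review):
    """Apply the same rule, but send denied cases with notes to a person."""
    outcome = rigid_decision(case)
    if outcome == "deny" and case.notes:
        return human_review(case, outcome)
    return outcome


def sympathetic_clerk(case, rule_outcome):
    """Stand-in for a human exercising discretion (a deliberately crude one)."""
    return "approve" if "medical" in case.notes else rule_outcome


unusual = Case(income=2500, household_size=2,
               notes="one-time medical insurance payout")

rigid_result = rigid_decision(unusual)
valve_result = decision_with_escape_valve(unusual, sympathetic_clerk)
# rigid_result is "deny"; valve_result is "approve"
```

Note that the escape valve is only as good as whoever sits behind it: the `human_review` callback is exactly the pocket of discretion that can fix a dumb result or introduce a biased one.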

The Internet of Kafkaesque Things

The bottom line is that the danger is not just that (as I discussed in my prior post) we will become increasingly subject to the micro-power of bureaucracies as computer chips saturate our lives. There is also the danger that the Kafkaesque absurdities and injustices that characterize bureaucracies will be amplified at those micro levels—and without even being leavened by any of the safety valves (formal or informal) that human bureaucracies often feature.

And the situation gets even worse. Just as applying general rules to specific circumstances inevitably involves discretion, so does translating human rules and policies into computer code. As Danielle Citron details in a fascinating paper entitled "Technological Due Process," that process of translation is fraught for a variety of reasons, including:

- The alien relationship between human language and computer code.

- The fact that like all translations, the process requires interpretative choices.

- The biases of programmers.

- Programmers' policy ignorance.

- The fact that real-world institutions may also have pragmatic, informal "street-level" policy practices to fix the more obvious contradictions or blind spots in a written policy, and those practices may not be reflected in computer code.

Looking as a case study at how the Medicaid program has been administered via computer rules, Citron describes the numerous problems, distortions, and injustices that resulted as the administration of the program was shifted to computers.

Overall, in a world where room for some discretion is vital for fair treatment, the mentally stiff and inflexible nature of computers threatens to extend the infuriating irrationalities of bureaucracies deep into our daily lives. But the interesting question is this: as our computers become increasingly intelligent (less mentally stiff and inflexible), what effect will that have on this situation? Some speculations on that in a future post.
