The FBI wants to establish the precedent that the government can conscript a technology supplier to break security features it has offered to its users. In particular, the legal order obtained by the FBI (directing Apple to assist the Bureau in accessing San Bernardino shooter Syed Rizwan Farook’s phone) asks Apple to misuse its software update mechanism, a mechanism that every Apple user depends on.

But the FBI’s demand is premised on the notion that the Bureau cannot access the encrypted data on Farook’s phone without Apple’s help. We were curious: is that actually the case? Answering that question requires looking at the technical details of how Apple’s encryption system works.

The basic technological situation

The FBI can easily extract the raw data from the phone’s flash memory, but they can’t read it because the information on Farook’s phone is protected by encryption that ultimately depends on a strong key we can call the “unlocking key.” This key is so strong that it would take the FBI longer than the age of the universe to crack it using “brute force” methods (i.e. guessing every possible key)—even with all the computers in the world dedicated to the task.

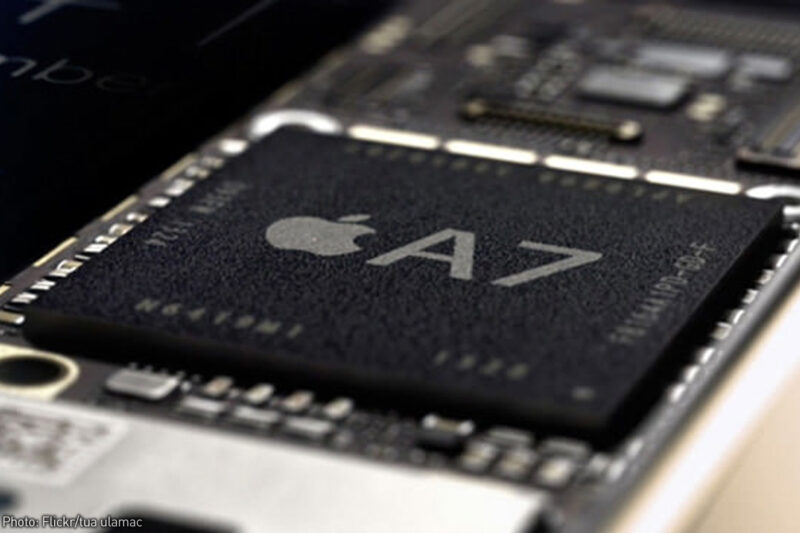
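
To put the “age of the universe” claim in rough numbers, here is a back-of-the-envelope sketch. It assumes a 256-bit key (the size Apple’s guide gives for the UID) and a deliberately generous, hypothetical rate of 10^18 guesses per second for all the world’s computers combined. Both figures are our assumptions for illustration, not numbers from the case.

```python
# Rough estimate only: the key size and guess rate below are assumptions
# chosen for illustration, not figures from the case.
KEY_BITS = 256                          # assumed key length
GUESSES_PER_SECOND = 10**18             # hypothetical worldwide guessing rate
SECONDS_PER_YEAR = 365.25 * 24 * 3600
AGE_OF_UNIVERSE_YEARS = 13.8e9          # commonly cited estimate


def years_to_try_all_keys(bits: int, guesses_per_second: float) -> float:
    """Years needed to try every possible key of the given length."""
    return 2**bits / guesses_per_second / SECONDS_PER_YEAR


years = years_to_try_all_keys(KEY_BITS, GUESSES_PER_SECOND)
print(f"Exhaustive search: about {years:.1e} years")
print(f"Multiples of the age of the universe: about {years / AGE_OF_UNIVERSE_YEARS:.1e}")
```

Even if the assumed guessing rate is off by many orders of magnitude, the conclusion does not change: guessing the key directly is not a realistic option.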

Of course, iPhone users don’t have to enter the unlocking key itself (a lengthy tangle of random digits) to open their phone. Instead, they just enter a shorter passcode. Passcodes vary in length, but they are usually short enough for a person to memorize, so there’s a strong chance the FBI could brute-force one. The problem for the FBI is that the passcode by itself does not unlock the phone. Instead, the weak passcode is combined with a hard-wired “unique ID” (UID) that is embedded within the phone when it is manufactured. The UID is itself used as an encryption key and, like the unlocking key, it is lengthy and cannot be brute-forced. When the passcode is entered, it is combined with the hard-wired UID to open the unlocking key, which unlocks the phone’s data. In other words: passcode + UID = unlocking key, and the unlocking key gives access to the data.
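
The “passcode + UID” relationship can be sketched in a few lines. This is a simplified illustration, not Apple’s actual construction: it uses the standard PBKDF2 function from Python’s `hashlib` and treats the UID as the salt, and the function name `derive_unlocking_key` and the iteration count are our own hypothetical choices. It also derives the key directly, whereas the phone uses the combination to open a stored key.

```python
import hashlib
import secrets


def derive_unlocking_key(passcode: str, uid: bytes, iterations: int = 10_000) -> bytes:
    """Combine a short passcode with a device-bound UID into a 256-bit key.

    Illustrative stand-in only: PBKDF2-HMAC-SHA256 with the UID as salt.
    """
    return hashlib.pbkdf2_hmac(
        "sha256", passcode.encode("utf-8"), uid, iterations, dklen=32
    )


# Two hypothetical phones, each with its own random 256-bit UID.
uid_phone_a = secrets.token_bytes(32)
uid_phone_b = secrets.token_bytes(32)

key_a = derive_unlocking_key("1234", uid_phone_a)
key_b = derive_unlocking_key("1234", uid_phone_b)

# The same passcode yields unrelated keys on different devices, so knowing
# or guessing the passcode is not enough without the UID.
print(key_a.hex())
print(key_b.hex())
```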

In short, the phone’s UID is everything. With it, the FBI can get what they’re asking for. Without it, they can’t.

If the FBI could extract the UID key from the phone, they could then take their own external copy of the phone’s data and bring supercomputers to bear on brute-forcing the passcode instead of using a single relatively weak phone processor. They could bypass the guessing-limit functions of Apple’s operating system without requiring Apple to create a whole new insecure operating system for them, and rapidly try combining billions of passcodes with the UID until they successfully unlock the data extracted from the phone. The FBI’s effort to conscript Apple would therefore be unnecessary.
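
The attack described above can be sketched as a simple loop. This is a toy model under stated assumptions: the derivation function is a PBKDF2 stand-in rather than Apple’s real scheme, the iteration count is kept tiny so the example runs quickly, and `unlocks_data` is a hypothetical check representing “this key successfully decrypts the copied data.”

```python
import hashlib
from itertools import product
from typing import Callable, Optional

ITERATIONS = 100  # deliberately small for the sketch; real schemes are far slower


def derive_unlocking_key(passcode: str, uid: bytes) -> bytes:
    """Illustrative stand-in for combining passcode and UID (not Apple's scheme)."""
    return hashlib.pbkdf2_hmac("sha256", passcode.encode("utf-8"), uid, ITERATIONS, dklen=32)


def brute_force_passcode(
    uid: bytes, unlocks_data: Callable[[bytes], bool], digits: int = 4
) -> Optional[str]:
    """Try every numeric passcode of the given length against a known UID."""
    for combo in product("0123456789", repeat=digits):
        guess = "".join(combo)
        if unlocks_data(derive_unlocking_key(guess, uid)):
            return guess
    return None


# Hypothetical scenario: the UID has been extracted from the chip and the
# attacker holds a copy of the encrypted data.
extracted_uid = bytes(range(32))
correct_key = derive_unlocking_key("4821", extracted_uid)


def unlocks_data(key: bytes) -> bool:
    # Stand-in for attempting to decrypt the copied data with this key.
    return key == correct_key


print(brute_force_passcode(extracted_uid, unlocks_data))
```

Because the loop runs on the attacker’s own hardware, nothing in it is subject to the phone’s guessing limits; a four-digit passcode means at most 10,000 attempts.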

That brings us to the question:

Is it really true that the FBI cannot access the UID key?

Certainly, preventing access to the UID is Apple’s stated engineering goal. The company’s Security Guide for iOS 9 states that the UID (and another key we don’t need to worry about)

are AES 256-bit keys fused…into the application processor…during manufacturing. No software or firmware can read them directly; they can see only the results of encryption or decryption operations performed by dedicated AES engines implemented in silicon using the UID…as a key.

The fuses in Apple’s chips are just like the fuses in your home’s wiring: electrical connections designed to “blow” when too much current flows through them. Imagine 256 fuses in a row. For each fuse, flip a coin. If it comes up heads, send enough current across the fuse to make it blow. If it comes up tails, leave the fuse alone. This series of blown and intact fuses, set in miniature inside the chip during Apple’s manufacturing process, constitutes the UID key. Apple says that no one has a record of that UID key for any phone.
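
The coin-flip picture maps directly onto bits. The sketch below is only a model of that analogy: the function names are hypothetical, and the convention that a blown fuse reads as 1 and an intact fuse as 0 is our assumption, not something Apple documents here.

```python
import secrets
from typing import List


def blow_fuses(count: int = 256) -> List[bool]:
    """Flip a fair coin per fuse: True means the fuse was blown."""
    return [secrets.randbits(1) == 1 for _ in range(count)]


def fuses_to_key(fuses: List[bool]) -> bytes:
    """Read a row of fuses as a key, assuming blown = 1 and intact = 0."""
    value = 0
    for blown in fuses:
        value = (value << 1) | int(blown)
    return value.to_bytes(len(fuses) // 8, "big")


fuse_row = blow_fuses()
uid_key = fuses_to_key(fuse_row)
print(len(uid_key) * 8, "bit key:", uid_key.hex())
```

Anyone who can observe the state of each fuse in order can reconstruct the same bytes, which is why the physical layout of the chip matters.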

While the chip is designed to not expose the UID key via its electrical interfaces, there is actually an array of physical fuses inside of it. Through processes that are already available to commercial and academic users, the FBI could etch off the surface of the chip, and then use a powerful microscope to inspect the silicon inside. If this succeeds in exposing the UID, then the device itself is no longer needed, and the FBI can proceed to crack the unlocking key by brute-forcing the passcode on their own hardware.

It would be extremely surprising if the FBI—or at least the NSA—did not already have the equipment and knowledge to perform this work. We know the FBI has used electron microscopes at least since 2002, for example. Certainly we’re not suggesting an expansion of NSA participation in domestic law enforcement investigations, but surely before any court considers setting such a dangerous precedent it should be certain that there aren’t less destructive alternatives.

It’s possible that the FBI has access to such capabilities but doesn’t want to advertise them because the facts around this case present them with a golden opportunity to establish a powerful new legal precedent—that they can force a technology provider to abuse their software update mechanism at the request of the government. That’s a precedent that will give them a significant new power.

In the end, of course, whether or not there is a way for the FBI to get that UID and Farook’s data without Apple, the order should still be overturned for the deeper reasons that our colleague Alex Abdo and others have laid out.

But before seeking a radical, sweeping, and dangerous new legal precedent, the FBI should explain why they are unable to extract the UID. Not only journalists but also judges should press the FBI on whether techniques such as those suggested above, or any others, could offer an alternative to the sweeping new power the Bureau is seeking.